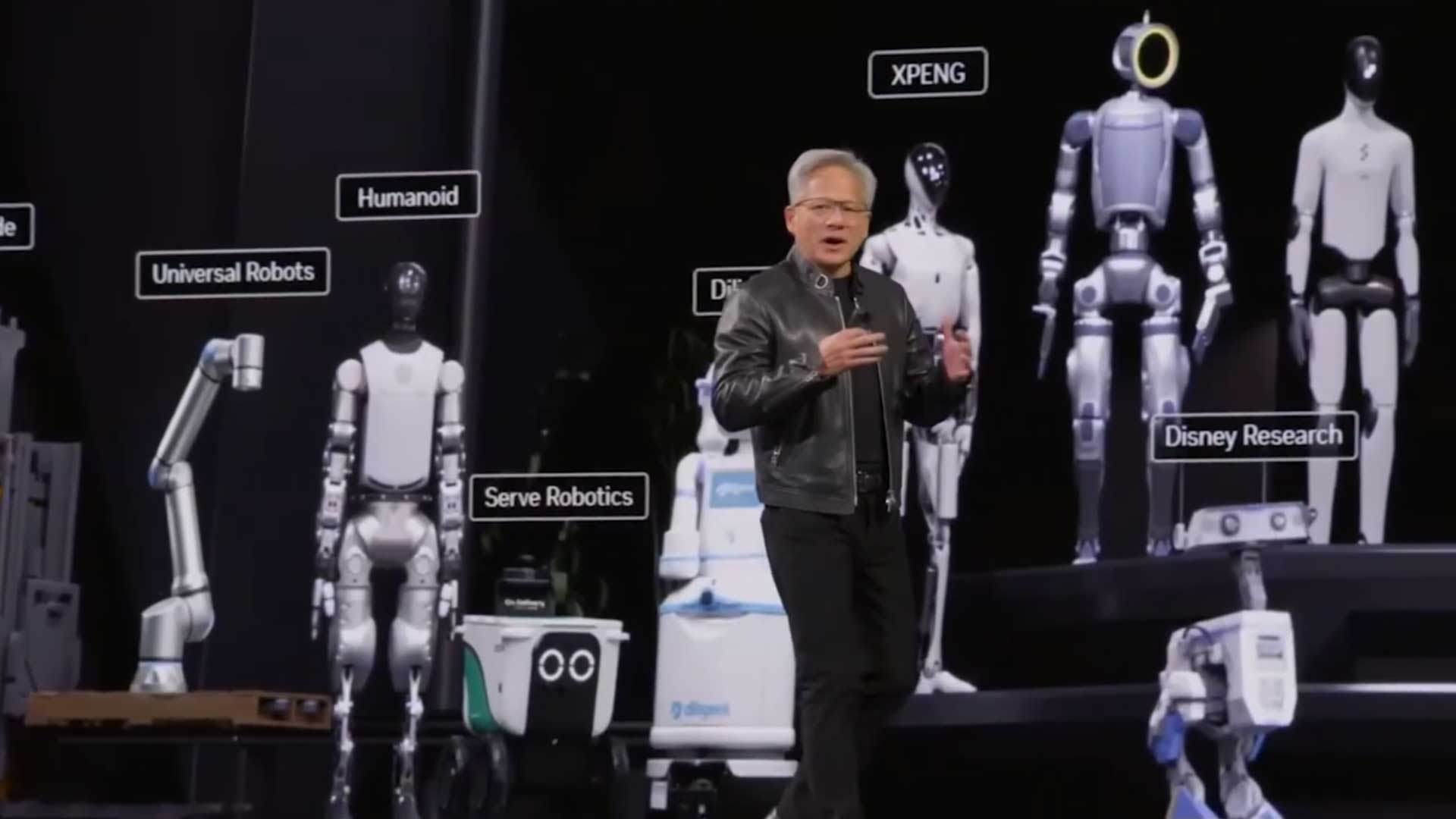

NVIDIA GTC 2026 was one of the biggest robotics moments of the year, and MANUS was proud to be part of it. From Jensen Huang's opening keynote to a live session on the GTC stage, from an exclusive hands-on demo space to deep integrations with NVIDIA's latest robotics stack, GTC 2026 validated what we've been building toward: precision hand tracking as the foundational human input layer for the next generation of teleoperation and embodied AI.

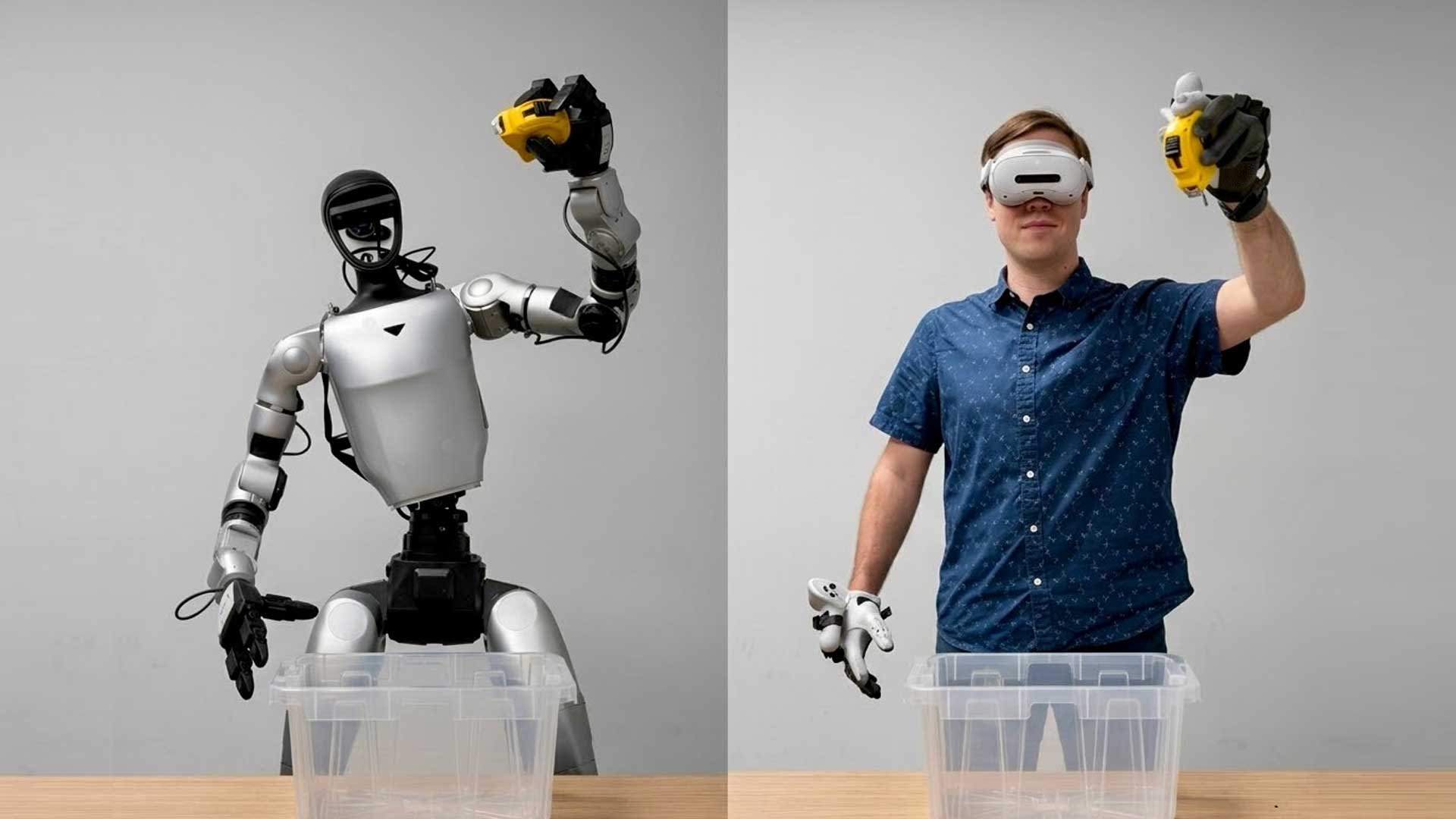

The week opened with MANUS gloves appearing in Jensen Huang's keynote for the second consecutive year. In the opening video, an operator wearing MANUS gloves teleoperated a NEURA Robotics humanoid in real time, translating natural hand motion into dexterous robot actions.

MANUS Co-Founder & CTO Maarten Witteveen took the GTC stage to discuss scalable teleoperation pipelines, joined by NVIDIA Distinguished Engineer Jiwen Cai and Lightwheel Chief Architect Jay Young. Maarten covered how to route human motion input from MANUS gloves through the MANUS SDK into Isaac Sim and Isaac Teleop, capturing structured demonstration data that transfers cleanly between simulation and physical systems. The session made a practical case that standardized tooling at the human input layer is what unlocks scalable robot learning.

At GTC 2026, NVIDIA open-sourced Isaac Teleop: a unified framework for teleoperation and structured demonstration data collection across both simulation and physical systems. MANUS gloves are an officially supported input device within this framework, streaming hand and finger tracking data through the MANUS SDK into a standardized pipeline that retargets human motion into robot actions and captures reusable demonstration data.
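To make "retargeting" and "demonstration capture" concrete, here is a minimal Python sketch of the general idea. It does not use the MANUS SDK or Isaac Teleop APIs: every name in it (`JointLimit`, `DemoFrame`, `DemoRecorder`, `retarget`, the joint names, and the limits) is hypothetical and chosen only for illustration. It assumes the simplest possible mapping, in which each tracked human joint angle drives one robot joint and is clamped to that joint's range.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class JointLimit:
    lower: float  # radians
    upper: float  # radians


@dataclass
class DemoFrame:
    timestamp: float                 # seconds since the recording started
    human_angles: Dict[str, float]   # tracked human joint angles (radians)
    robot_targets: Dict[str, float]  # retargeted robot joint commands (radians)


def retarget(
    human_angles: Dict[str, float],
    joint_map: Dict[str, str],
    limits: Dict[str, JointLimit],
    scale: float = 1.0,
) -> Dict[str, float]:
    """Map human joint angles onto robot joints, clamped to each joint's limits."""
    targets: Dict[str, float] = {}
    for human_joint, robot_joint in joint_map.items():
        if human_joint not in human_angles:
            continue  # skip joints missing from this tracking sample
        limit = limits[robot_joint]
        value = human_angles[human_joint] * scale
        targets[robot_joint] = min(max(value, limit.lower), limit.upper)
    return targets


@dataclass
class DemoRecorder:
    """Collects (human input, robot command) pairs as demonstration frames."""
    frames: List[DemoFrame] = field(default_factory=list)

    def record(self, timestamp: float, human_angles: Dict[str, float],
               robot_targets: Dict[str, float]) -> None:
        self.frames.append(DemoFrame(timestamp, dict(human_angles), dict(robot_targets)))


# Hypothetical joint names and limits, for illustration only.
joint_map = {"index_mcp": "finger1_joint1", "index_pip": "finger1_joint2"}
limits = {
    "finger1_joint1": JointLimit(0.0, 1.5),
    "finger1_joint2": JointLimit(0.0, 1.2),
}

recorder = DemoRecorder()
sample = {"index_mcp": 0.4, "index_pip": 1.6}  # one tracking sample
targets = retarget(sample, joint_map, limits)
recorder.record(0.0, sample, targets)
print(targets)  # {'finger1_joint1': 0.4, 'finger1_joint2': 1.2}
```

A production pipeline would typically do far more than this, for example solving for fingertip positions rather than copying joint angles, but the sketch shows why a standardized frame format matters: the same recorded frames can be replayed against a simulated or a physical hand.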

Learn more about the MANUS integration with NVIDIA Isaac Teleop here.

Steps away from the main venue, MANUS hosted an exclusive private demo space where attendees could go hands-on. The centerpiece was real-time teleoperation of the Sharpa Wave, a 22-DoF dexterous robotic hand, using MANUS gloves. The setup demonstrated the high-fidelity data capture that makes teleoperation genuinely useful for training embodied AI systems.

During GTC week, robotics expert Dr. Scott Walter got hands-on with the Sharpa Wave teleoperation setup, testing real-time object interaction with MANUS gloves. The session demonstrated how combining tactile sensing with precise finger tracking improves manipulation performance in ways that matter for real-world deployment.

Sharpa also hosted tech content creators Ilir Aliu and The Humanoid Hub, who spent time teleoperating the Sharpa Wave with MANUS gloves.

At the Analog Devices (ADI) booth during GTC, MANUS gloves served as the human motion capture layer in ADI's simulation-based training pipeline. In that pipeline, an operator wearing the glove teleoperates a simulated dexterous hand, and the IPC physics engine generates real-time tactile sensor data and deformable contact feedback in response. The resulting paired dataset of human motion alongside simulated tactile response trains ADI's anti-slip grasping algorithm, closing the sim-to-real gap for contact-rich robotic manipulation.
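As an illustration of what a "paired dataset" can look like in practice, the Python sketch below aligns two timestamped streams, hand motion and tactile readings, by nearest timestamp. This is not ADI's pipeline or data format: the function name `pair_by_timestamp`, the sample layout, and the `max_gap` tolerance are all assumptions made for this example.

```python
from bisect import bisect_left
from typing import List, Sequence, Tuple

Sample = Tuple[float, List[float]]  # (timestamp in seconds, values)


def pair_by_timestamp(
    motion: Sequence[Sample],
    tactile: Sequence[Sample],
    max_gap: float = 0.005,
) -> List[Tuple[List[float], List[float]]]:
    """Pair each motion sample with the nearest tactile sample in time.

    Both streams must be sorted by timestamp. Motion samples with no
    tactile reading within max_gap seconds are dropped.
    """
    tactile_times = [t for t, _ in tactile]
    pairs: List[Tuple[List[float], List[float]]] = []
    for t_motion, pose in motion:
        i = bisect_left(tactile_times, t_motion)
        # The nearest tactile sample is either just before or just after i.
        candidates = [j for j in (i - 1, i) if 0 <= j < len(tactile)]
        if not candidates:
            continue
        best = min(candidates, key=lambda j: abs(tactile_times[j] - t_motion))
        if abs(tactile_times[best] - t_motion) <= max_gap:
            pairs.append((pose, tactile[best][1]))
    return pairs


# Made-up samples: two joint angles per motion frame, one pressure value
# per tactile frame.
motion = [(0.000, [0.10, 0.20]), (0.010, [0.15, 0.25]), (0.050, [0.30, 0.40])]
tactile = [(0.001, [0.0]), (0.011, [0.5])]

pairs = pair_by_timestamp(motion, tactile)
print(len(pairs))  # 2 (the last motion frame has no tactile reading nearby)
```

Each resulting pair is one training example: the hand pose the operator produced and the contact response the simulator generated at roughly the same moment.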

Precision hand tracking is becoming the foundational layer connecting human expertise to robot capability, and GTC 2026 made that case in the most visible way possible: on Jensen Huang's keynote stage, inside NVIDIA's official robotics framework, and in the hands of the researchers, engineers, and builders who are deploying these systems today. The work continues, and we're building the infrastructure that makes it possible. If you're working on teleoperation, embodied AI training, or dexterous manipulation and want to see what MANUS gloves can do in your pipeline, we'd love to talk. Contact our team.