Robotics research currently offers multiple approaches to human-to-robot teleoperation, including vision-based tracking, motion capture systems, VR interfaces, and exoskeleton suits. However, there has been no standardized framework for comparing these methods objectively and consistently.

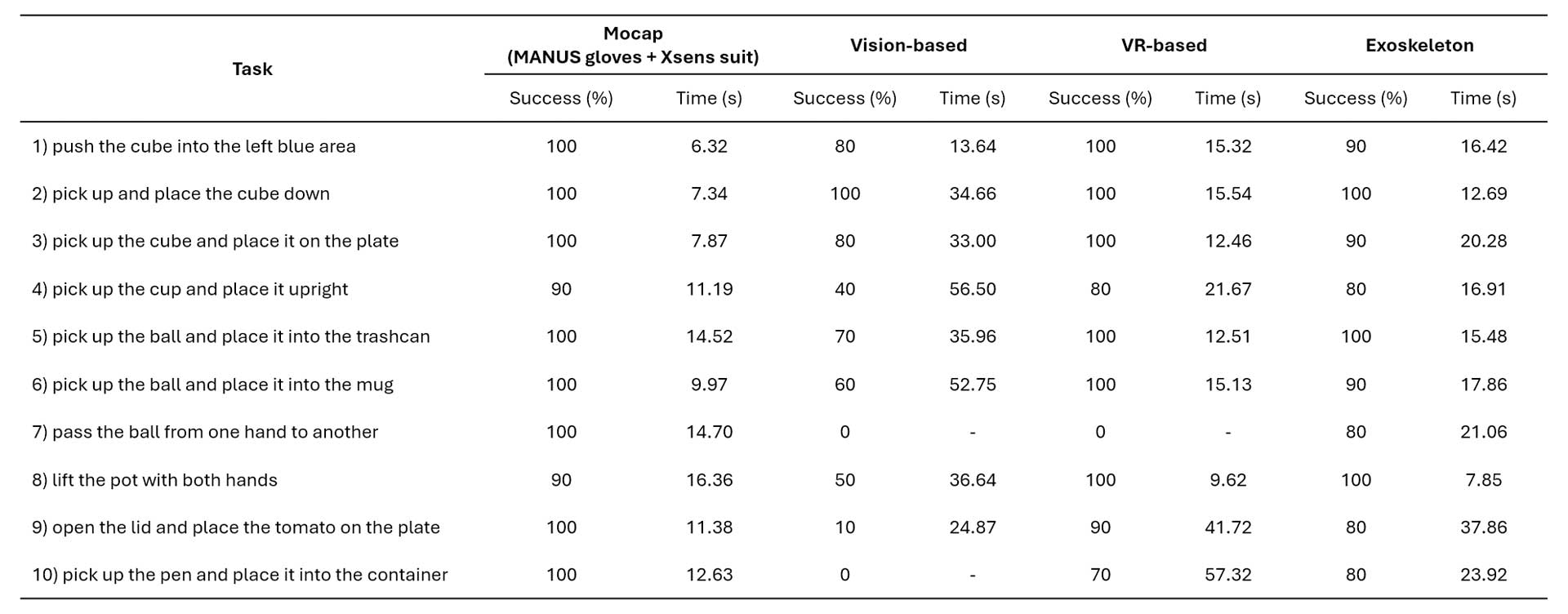

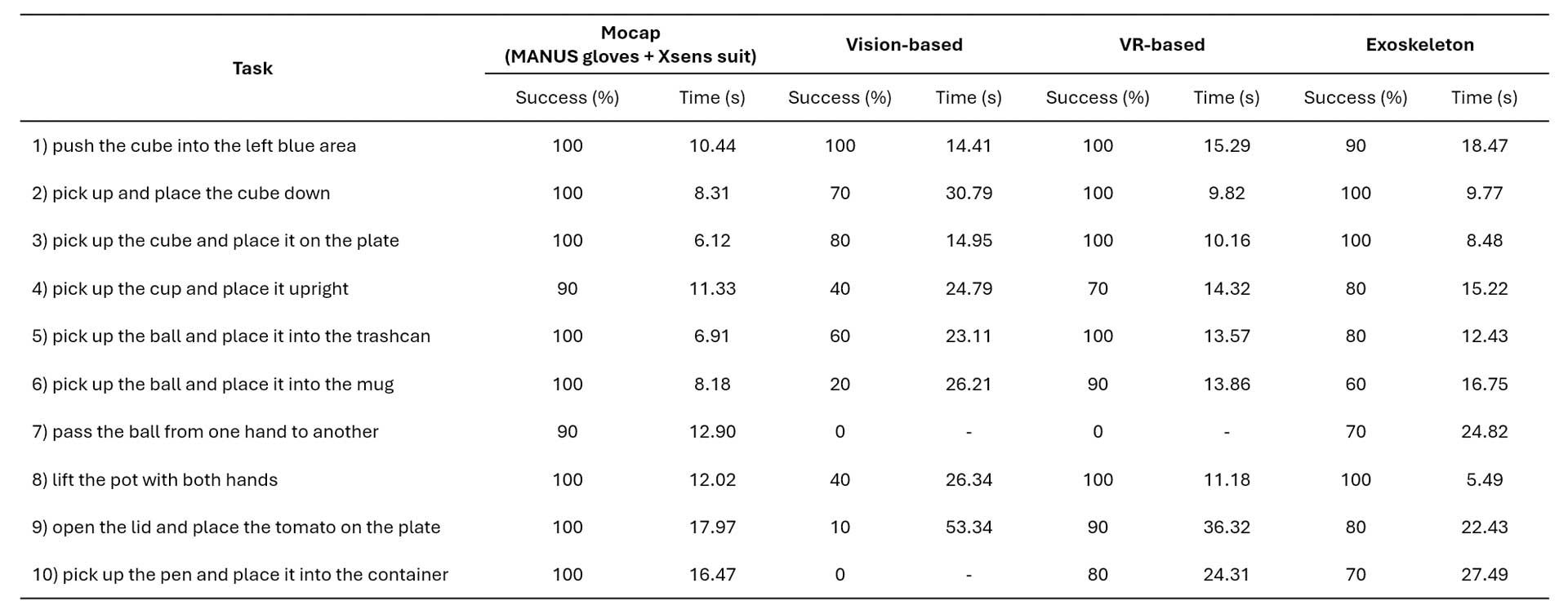

A 2025 study from Shanghai AI Laboratory addresses this gap by introducing TeleOpBench, a unified benchmark for evaluating dual-arm dexterous teleoperation. The benchmark runs consistent tasks in NVIDIA Isaac Sim and replicates them in real-world settings, using task success rate and completion time as primary evaluation metrics across both simulation and physical environments.
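
To make the two metrics concrete, the sketch below shows one way to aggregate per-trial logs into a success rate and a mean completion time for each interface. It is a minimal illustration, not the benchmark's own code: the `Trial` record, the `summarize` function, the interface and task labels, and the numbers are all hypothetical, and averaging completion time over successful trials only is an assumption rather than something the study specifies.

```python
from collections import defaultdict
from dataclasses import dataclass
from statistics import mean


@dataclass
class Trial:
    """One teleoperation attempt (hypothetical record layout)."""
    interface: str            # e.g. "mocap", "vision", "vr", "exoskeleton"
    task: str                 # placeholder task label
    success: bool             # did the operator complete the task?
    completion_time_s: float  # wall-clock duration of the attempt


def summarize(trials):
    """Return success rate and mean completion time per interface.

    Assumption: completion time is averaged over successful trials only,
    so failed attempts do not skew the timing metric.
    """
    grouped = defaultdict(list)
    for trial in trials:
        grouped[trial.interface].append(trial)

    summary = {}
    for interface, group in grouped.items():
        successes = [t for t in group if t.success]
        summary[interface] = {
            "success_rate": len(successes) / len(group),
            "mean_time_s": (
                mean(t.completion_time_s for t in successes)
                if successes
                else None
            ),
        }
    return summary


# Illustrative placeholder data, not results from the study.
example_trials = [
    Trial("mocap", "task_a", True, 10.0),
    Trial("mocap", "task_a", True, 14.0),
    Trial("vision", "task_a", True, 20.0),
    Trial("vision", "task_a", False, 30.0),
]
example_summary = summarize(example_trials)
```

Keeping the two metrics separate, rather than folding them into a single score, mirrors how the article reports them and makes it easy to see when an interface is reliable but slow.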

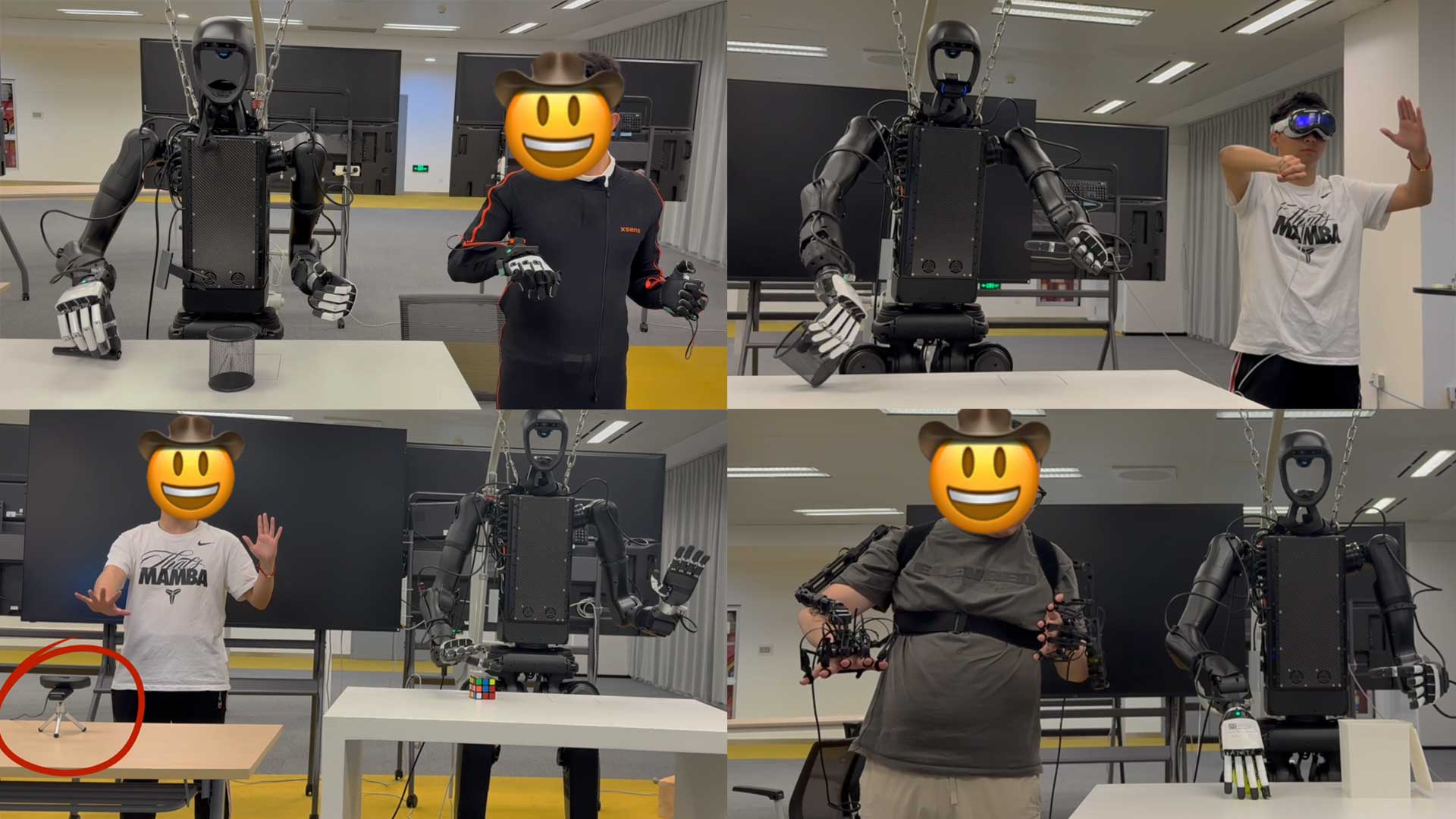

TeleOpBench compares four methods of capturing and transferring human motion to robots: vision-based tracking, motion capture, VR interfaces, and exoskeletons.

The experiments compare the four teleoperation interfaces on 10 representative tasks of varying complexity, using three commercial humanoid platforms (Unitree H1-2, Fourier GR1-T2, and Unitree G1).

Across both simulation and real-world experiments, performance trends remain consistent. The motion capture pipeline using MANUS data gloves with Xsens MVN achieves the highest success rates and fastest completion times. It demonstrates superior precision in grasping, insertion, and bimanual coordination, with strong sim-to-real transfer.

Exoskeleton and VR systems perform reliably but show specific limitations such as slower gross arm movement or sensitivity to occlusion. Vision-based tracking performs adequately for simple tasks but struggles in complex, high-dexterity scenarios.

For embodied robot learning, the quality of human demonstrations directly affects policy performance and sim-to-real transfer. Small differences in finger articulation, motion smoothness, and coordination can significantly impact how well a learned manipulation policy performs in real-world tasks. High-resolution tracking is therefore essential for reliable robot learning.

The TeleOpBench results show that high-fidelity finger and limb tracking enabled by MANUS data gloves delivers the most accurate and efficient teleoperation data among the evaluated systems. By capturing detailed joint angles and smooth motion trajectories, the MANUS-based motion capture pipeline supports precise grasping, insertion, and bimanual coordination.